Matteo Nulli

Machine Learning Research & Engineering

Currently at eBay — Foundation Models Team, building adaptable multimodal models and efficient optimization pipelines for real-world applications.

Formerly at UvA, UTN, Bocconi, USYD.

about

👋 Hi, I am Matteo, an Applied ML Researcher on the Foundation Models Team at eBay. I work with Dr. Shahram Khadivi on research and engineering in multimodal learning, inference optimisation and multimodal search reasoning.

💭 My current research interests lie in Efficient Multimodal Modeling. In the recent past, I focused on visual representation learning (here), adaptable VLM Architectures (here) and inference optimisation pipelines (here).

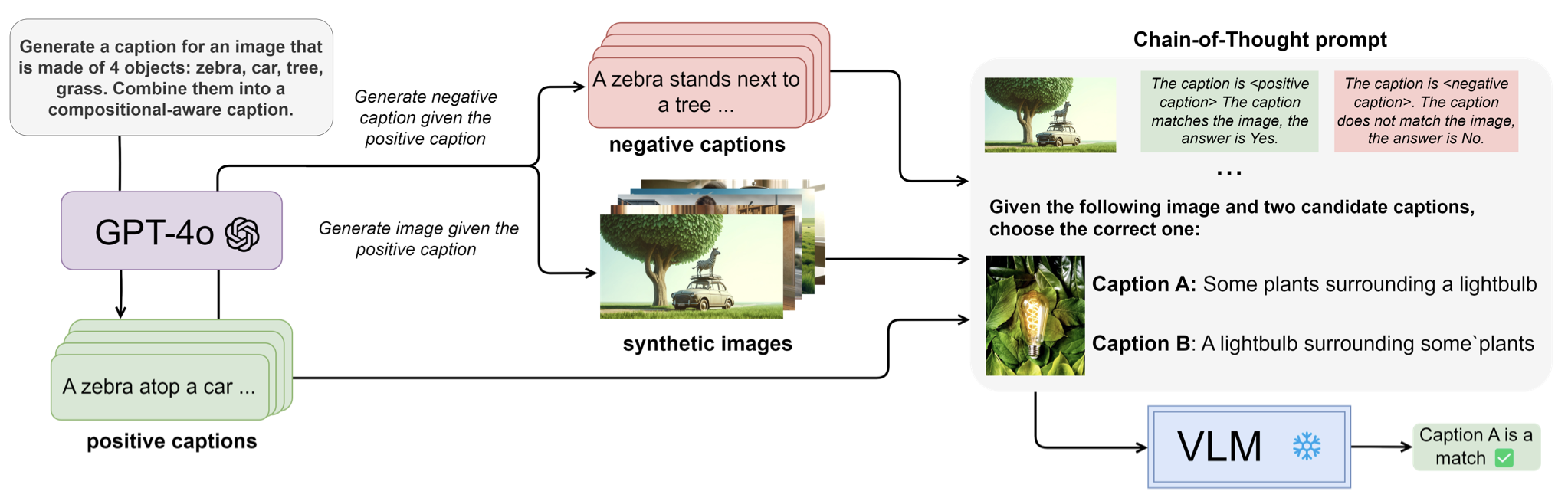

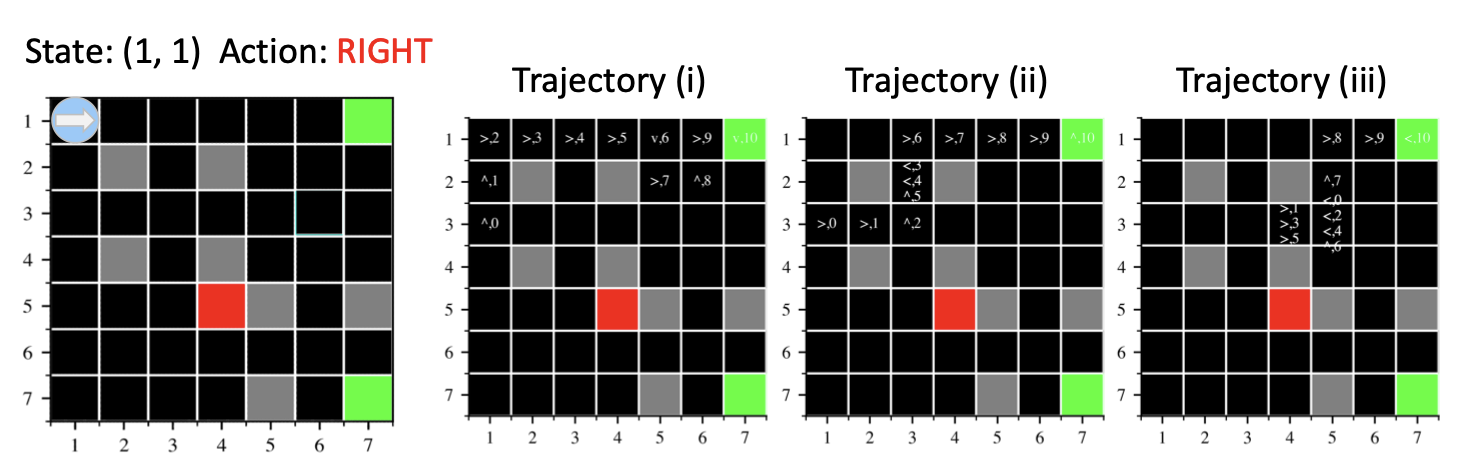

🔙 Previously, I graduated cum laude with ELLIS Honours from the MSc in Artificial Intelligence at the University of Amsterdam. I was lucky to be supervised by Prof. Yuki Asano at FunAI Lab, Dr. Ivona Najdenkoska and Dr. Mohammad Mahdi Derakhshani to investigate Compositional Reasoning in Multimodal Foundation Models. I also spent time at eBay as a Research Intern, supervised by Prof. Cees Snoek, working with Dr. Hadi Hashemi and Vladimir Orshulevich. Before that, I graduated from Bocconi University with a BSc in Mathematical and Computing Sciences for AI and was an exchange student in Applied Mathematics and Computer Science at the University of Sydney on a full-ride scholarship.

news

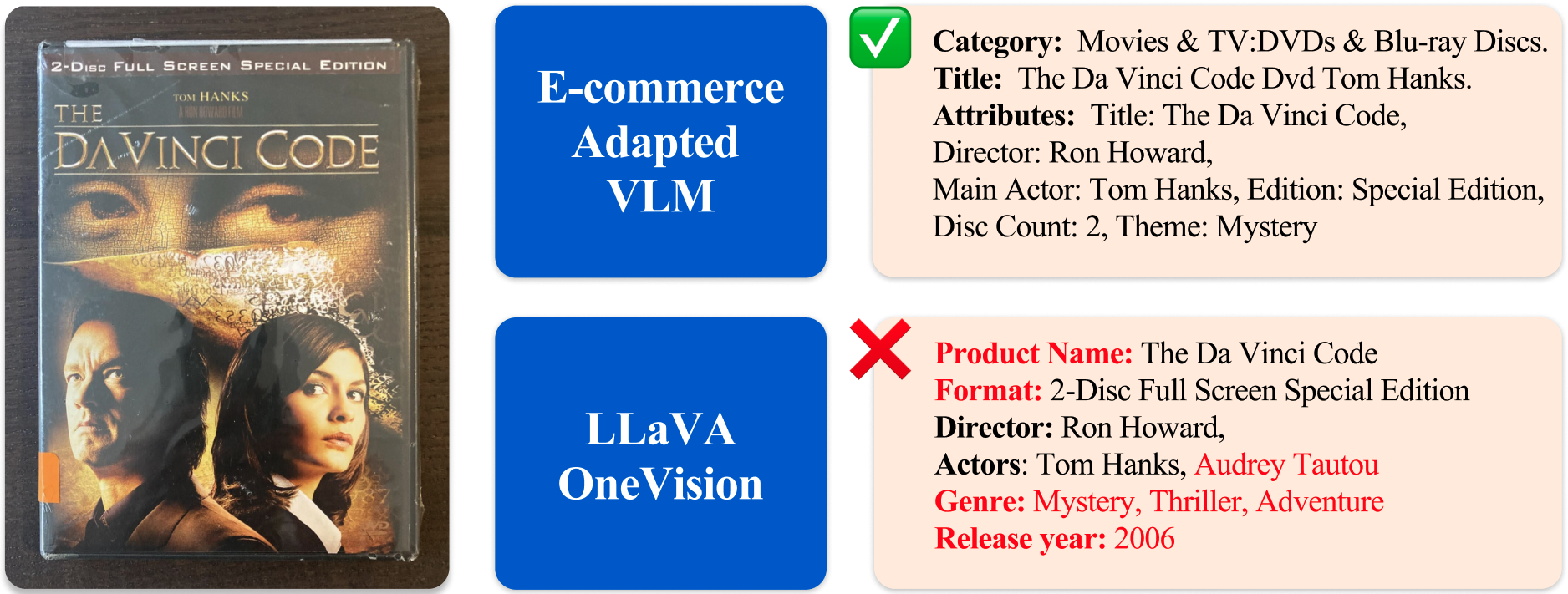

| Feb 10, 2026 | 📝 Our paper “Adapting Vision-Language Models for E-commerce Understanding at Scale” was accepted as an Oral at the EACL Industry Track, see you in Morocco! 🇲🇦 |

|---|---|

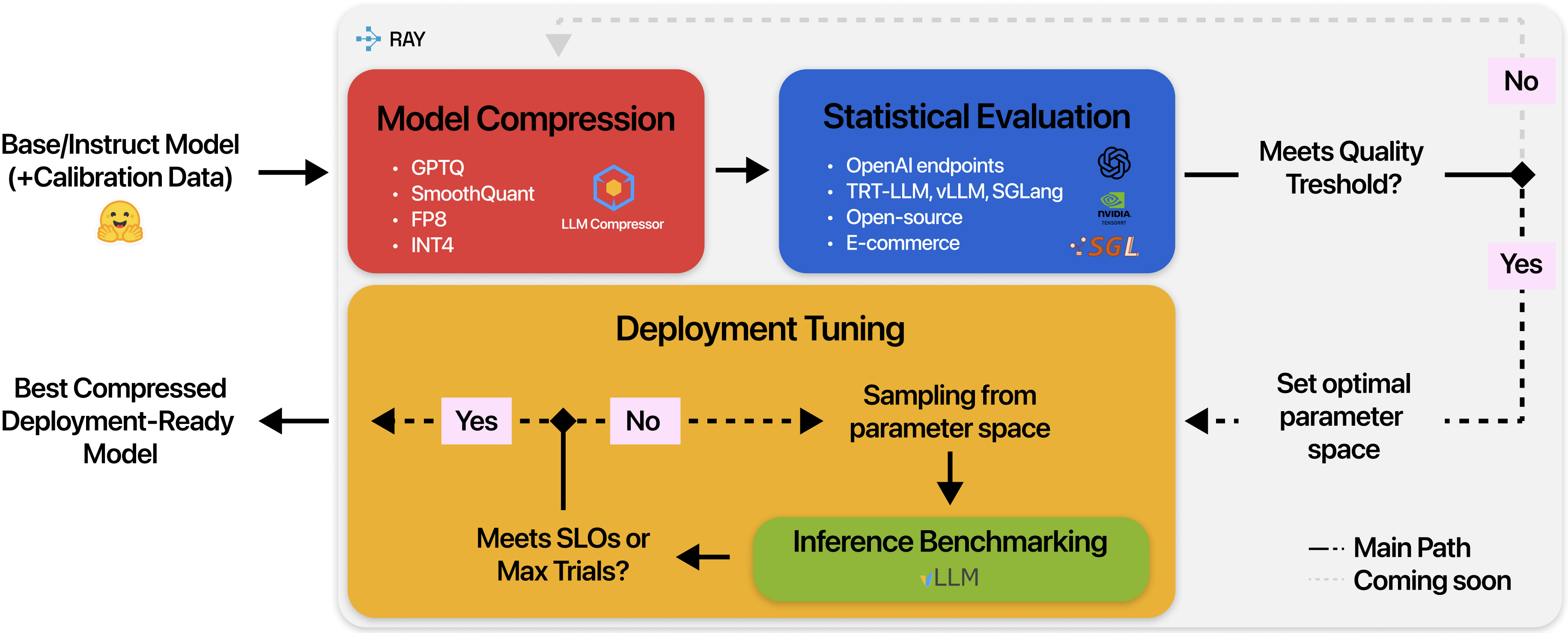

| Jan 29, 2026 | 📝 MLSys accepted our paper “Meeting SLOs, Slashing Hours: Automated Enterprise LLM Optimization with OptiKIT” for the Industry Track! |

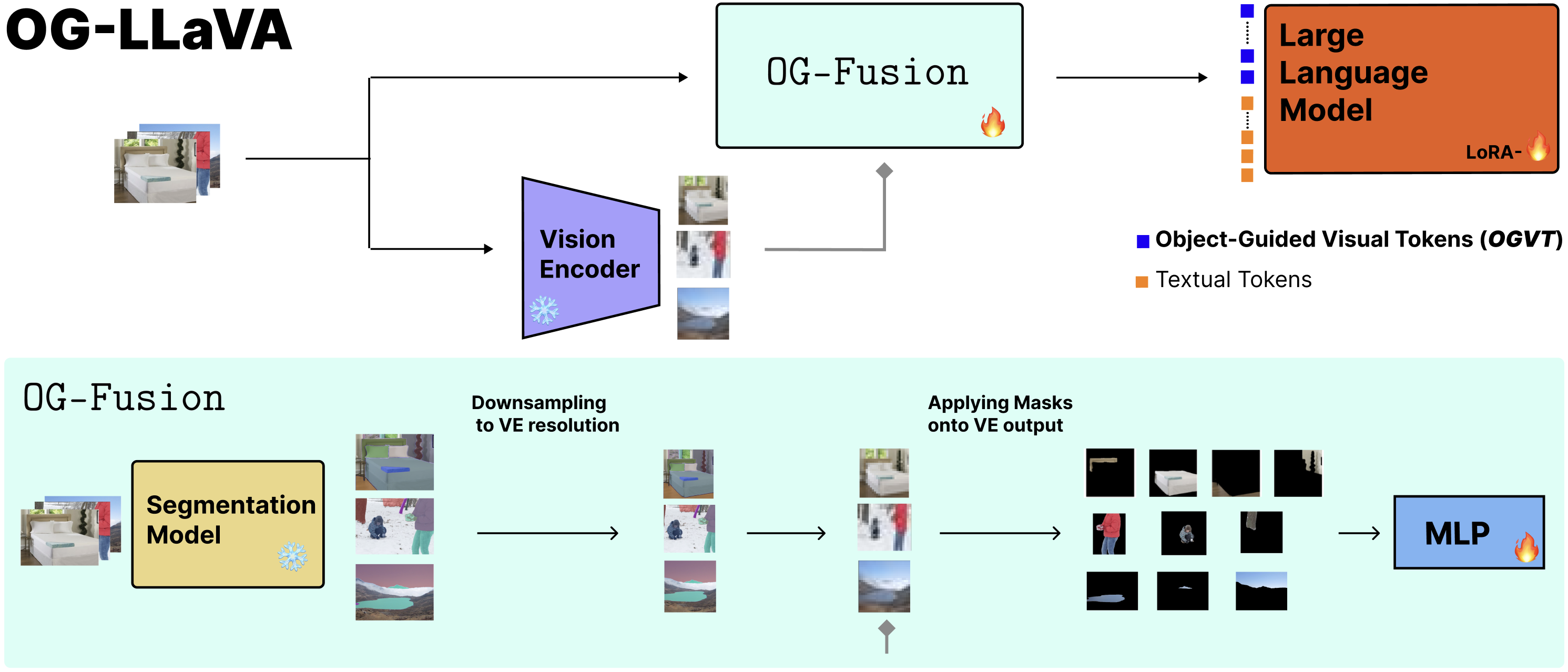

| Nov 28, 2025 | 📝 Going to EurIPS! Our paper “Object-Guided Visual Tokens: Eliciting Compositional Reasoning in Multimodal Language Models” was just accepted to the EurIPS Principles of Generative Modelling Workshop, see you in Copenhagen. 🇩🇰 |

| Oct 29, 2025 | Happy to share that I just graduated cum laude with ELLIS Honours from the Master of Science in Artificial Intelligence at the University of Amsterdam! 🎓 |

| Aug 18, 2025 | Just started working as a full-time Applied Researcher on the eBay Foundation Models Team with Hadi and Shahram. Excited to (re-)start. 👾 |

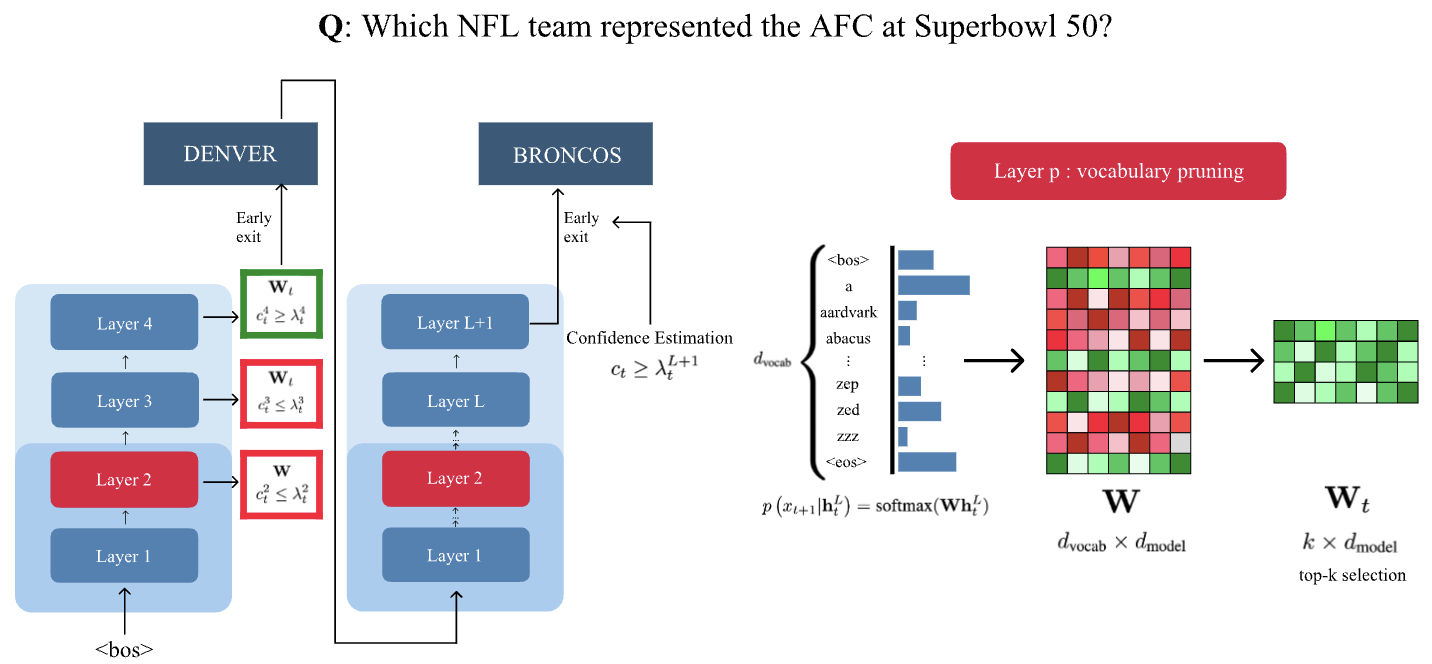

| Demystifying Multimodal Learning: Impact of Visual Tokens on Inference Latency. A blogpost series on the nuts and bolts of Multimodal Learning. Apr 24, 2026 |